Upcoming Events!


Boston SMTA/iMAPS/IEEE Boston/New Hampshire/Providence Reliability Chapter Event

Greetings, fellow Reliability enthusiasts!

The IEEE Reliability Society’s Joint Boston/New Hampshire/Providence Chapter is pleased to be co-hosting with Boston SMTA and iMAPS an evening meeting at Advanced MicroAnalytical in Salem, NH. This will include dinner, a discussion of analytical tools and techniques, and a facilities tour.

To register, please use this Link to SMTA Registration.

Hope to see you there!

Best regards, James P. (Jay) Yakura, Chair, Joint Boston/New Hampshire/Providence Chapter, IEEE Reliability Society

Overview:

This meeting will cover laboratory techniques and test methods for a variety of samples, from components to PCBAs and whole commercial devices. A number of familiar analytical techniques related to reliability and process inspection will be discussed and demonstrated, including visual inspection and standard techniques like Ball Shear, X-ray Imaging, CSAM, and other standard composite methods. Additionally, more specialized approaches to Failure Analysis, research, and process development will be demonstrated, including the use of multiple types of electron microscopes, spectroscopy, and Focused Ion Beam analysis for 3D examination of devices on a nano-scale.

Advanced MicroAnalytical is part of the EMSL Analytical network. Coming up on 10 years this May, Advanced MicroAnalytical has been delivering in-depth scientific support for a wide range of industries and sample types. Our staff and analytical capabilities are primed to provide leading-edge support for industries including manufacturing, microelectronics, nano-fabrication, aerospace and defense, medical devices, and more. This meeting will demonstrate the type of workflow associated with finding and understanding problems that challenge attending members, from initial product development choices through reliability, product support, and customer-facing FA efforts. Advanced MicroAnalytical is located in the hub of technology on the East Coast just north of Boston, MA, in Salem, NH.

Cost: Members: $25, Non-members: $30, Students/Retired: $10

***IEEE and iMAPS members: please contact Mike Jansen (mjansen@macktech.com) to receive a promo code for the discounted rate.***

If you are not an SMTA member, you may click “Continue as Guest” on the registration page.

Hosts: Boston SMTA, iMAPS, & IEEE Boston/Providence/New Hampshire Jt Sections, RL07

Registration – Link to SMTA Registration

Speakers:

Jared Kelly – Advanced MicroAnalytical

Hal Winslow – Symbotic

Chuck Lemieux – Advanced MicroAnalytical

Agenda:

5:30 PM – Registration

6:00 PM – Dinner

6:30 PM – Presentation

7:30 PM – Tour

9:00 PM – Adjourn

If you no longer wish to receive these meeting announcements, please send an email to James P. Yakura with “Unsubscribe” in the subject line.

Microwave Theory and Techniques Society

Refreshments (pizza and beverages) with socialization will start at 5:30 PM.

The technical talk will be from 6:00 – 7:00 PM.

Anthony Grbic of the University of Michigan

The research area of metamaterials has captured the imagination of scientists and engineers over the past two decades by allowing unprecedented control of electromagnetic fields. The extreme manipulation of fields has been made possible by the fine spatial control and wide range of material properties that can be attained through subwavelength structuring. Research in this area has resulted in devices which overcome the diffraction limit, render objects invisible, and even break time reversal symmetry. It has also led to flattened and conformal optical systems and ultra-thin antennas. This seminar will identify recent advances in the growing area of metamaterials, with a focus on metasurfaces: two-dimensional metamaterials. The talk will explain what they are, the promise they hold, and how these field-transforming surfaces are forcing the rethinking of electromagnetic/optical design.

Electromagnetic metasurfaces are finely patterned surfaces whose intricate patterns/textures dictate their electromagnetic properties. Conventional field-shaping devices, such as lenses in prescription eyeglasses or a magnifying glass, require thickness (propagation length) to manipulate electromagnetic waves through interference. In contrast, metasurfaces manipulate electromagnetic waves across negligible thicknesses through surface interactions, by impressing abrupt phase and amplitude discontinuities onto a wavefront. The role of the visible (propagating) and invisible (evanescent) spectrum in establishing these discontinuities will be explained. In addition, it will be shown how metasurfaces allow the complete transformation of fields across a boundary, and how this unique property is driving a new generation of ultra-compact electromagnetic and optical devices with unparalleled field control. Metasurfaces will be described that exhibit various field-tailoring capabilities, including multiwavelength and multifunctional performance and extreme field shaping. In addition, metasurfaces with multi-input to multi-output capabilities will be presented that open new opportunities in adaptive and trainable designs.

Biography: Anthony Grbic received the B.A.Sc., M.A.Sc., and Ph.D. degrees in electrical engineering from the University of Toronto, Canada, in 1998, 2000, and 2005, respectively. In 2006, he joined the Department of Electrical Engineering and Computer Science, University of Michigan, Ann Arbor, MI, USA, where he is currently a Professor. His research interests include engineered electromagnetic structures (metamaterials, metasurfaces, electromagnetic band-gap materials, frequency-selective surfaces), microwave circuits, antennas, plasmonics, wireless power transmission, and analytical electromagnetics/optics.

Anthony Grbic has made pioneering contributions to the theory and development of electromagnetic metamaterials and metasurfaces: finely textured, engineered electromagnetic structures/surfaces that offer unprecedented wavefront control. Dr. Grbic is a Fellow of the IEEE. He is currently an IEEE Microwave Theory and Techniques Society Distinguished Microwave Lecturer (2022-2025). He is also serving on the IEEE Antennas and Propagation Society (AP-S) Field Awards Committee and IEEE Fellow Selection Committee. From 2018 to 2021, he served as a member of the Scientific Advisory Board, International Congress on Artificial Materials for Novel Wave Phenomena – Metamaterials. In addition, he was Vice Chair of Technical Activities for the IEEE Antennas and Propagation Society, Chapter IV (Trident), IEEE Southeastern Michigan Section, from September 2007 to 2021. From July 2010 to July 2015, he was Associate Editor for the rapid publication journal IEEE Antennas and Wireless Propagation Letters. Prof. Grbic was Technical Program Co-Chair in 2012 and Topic Co-Chair in 2016 and 2017 for the IEEE International Symposium on Antennas and Propagation and USNC-URSI National Radio Science Meeting.

Dr. Grbic received an AFOSR Young Investigator Award and an NSF Faculty Early Career Development Award in 2008, and the Presidential Early Career Award for Scientists and Engineers in January 2010. He also received an Outstanding Young Engineer Award from the IEEE Microwave Theory and Techniques Society, a Henry Russel Award from the University of Michigan, and a Booker Fellowship from the United States National Committee of the International Union of Radio Science in 2011. He was the inaugural recipient of the Ernest and Bettine Kuh Distinguished Faculty Scholar Award in the Department of Electrical Engineering and Computer Science, University of Michigan, in 2012. In 2018, Prof. Grbic received a University of Michigan Faculty Recognition Award for outstanding achievement in scholarly research, excellence as a teacher, advisor, and mentor, and distinguished service to the institution and profession. In 2021, he was selected as one of five finalists worldwide for the A.F. Harvey Engineering Research Prize for his pioneering contributions to the field of electromagnetic metamaterials. The A.F. Harvey Prize is the Institution of Engineering and Technology’s (IET’s) most valuable prize fund.

COURSE DESCRIPTION

Course Kick-off / Orientation: Thursday, April 18, 6:00PM – 6:30PM.

Live Workshops: 6:00PM – 7:30PM, Thursdays, April 25, May 2, 9, 16, 23

Registration is open through the last live workshop date. Live workshops are recorded for later use.

Attendees will have access to the recorded session and exercises for two months (until July 23, 2024) after the live session ends!

Speaker: Dan Boschen

IEEE Member Fee (by April 11th): $190.00

IEEE Member Fee (after April 11th): $285.00

IEEE Non-Member Fee (by April 11th): $210.00

IEEE Non-Member Fee (after April 11th): $315.00

Decision to run/cancel course: Friday, April 12, 2024


New Format Combining Live Workshops with Pre-recorded Video

This is a hands-on course providing pre-recorded lectures that students can watch on their own schedule, an unlimited number of times, prior to live Q&A/Workshop sessions with the instructor. Ten 1.5-hour videos, released two per week while the course is in session, will be available for up to two months after the conclusion of the course.

Course Summary

This course is a fresh view of the fundamental and practical concepts of digital signal processing applicable to mixed-signal design with A/D conversion, digital filters, operations with the FFT, and multi-rate signal processing. This course will build an intuitive understanding of the underlying mathematics through the use of graphics, visual demonstrations, and applications in GPS and modern mixed-signal (analog/digital) transceivers. This course is applicable to DSP algorithm development with a focus on meeting practical hardware development challenges in both the analog and digital domains; it is not a tutorial on working with specific DSP processor hardware.

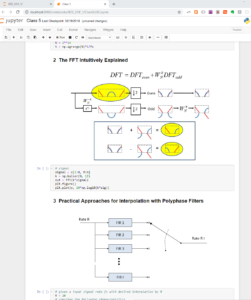

Now with Jupyter Notebooks!

This long-running IEEE Course has been updated to include Jupyter Notebooks, which incorporate graphics together with Python simulation code to provide a “take-it-with-you” interactive user experience. No knowledge of Python is required, but the notebooks will provide a basic framework for proceeding with further signal processing development using these tools for those who are interested in doing so.

This course will not be teaching Python, but using it for demonstration. A more detailed course on Python itself is covered in a separate IEEE Course, “Python Applications for Digital Design and Signal Processing”.

Students will be encouraged but not required to load all the Python tools needed, and all set-up information for installation will be provided prior to the start of class.

Target Audience:

All engineers involved in or interested in signal processing applications. Engineers with significant experience with DSP will also appreciate this opportunity for an in-depth review of the fundamental DSP concepts from a different perspective than that given in a traditional introductory DSP course.

Benefits of Attending / Goals of Course:

Attendees will build a stronger intuitive understanding of the fundamental signal processing concepts involved with digital filtering and mixed-signal analog and digital design. With this, attendees will be able to implement more creative and efficient signal processing architectures in both the analog and digital domains. The knowledge gained from this course will have immediate practical value for any work in the signal processing field.

Topics / Schedule:

Pre-recorded lectures (3 hours each week) will be distributed the Friday prior to each week’s workshop date. Workshop/Q&A Sessions are 6:00 – 7:30PM on the dates listed below.

Kick-off / Orientation: Thursday, April 18, 2024

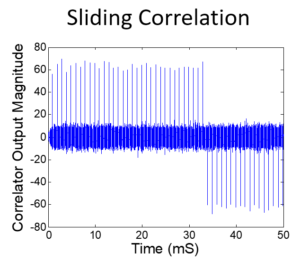

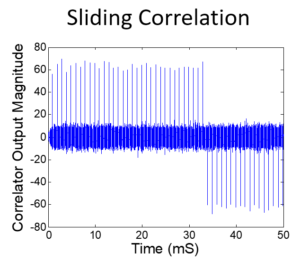

Class 1: April 25, 2024: Correlation, Fourier Transform, Laplace Transform

Class 2: May 2, 2024: Sampling and A/D Conversion, Z-transform, D/A Conversion

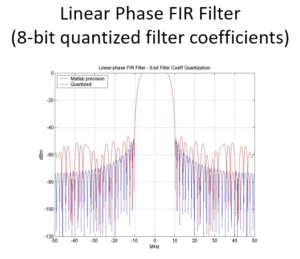

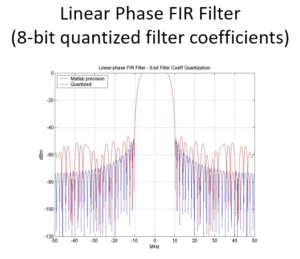

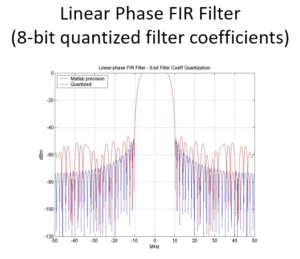

Class 3: May 9, 2024: IIR and FIR Digital Filters, Direct Fourier Transform

Class 4: May 16, 2024: Windowing, Digital Filter Design, Fixed Point vs Floating Point

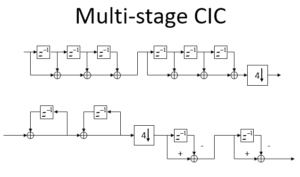

Class 5: May 23, 2024: Fast Fourier Transform, Multi-rate Signal Processing, Multi-rate Filters

Speaker’s Bio:

Dan Boschen has an MS in Communications and Signal Processing from Northeastern University, with over 25 years of experience in system and hardware design for radio transceivers and modems. He has held various positions at Signal Technologies, MITRE, Airvana, and Hittite Microwave designing and developing transceiver hardware from baseband to antenna for wireless communications systems. Dan is currently at Microchip (formerly Microsemi and Symmetricom) leading design efforts for advanced frequency and time solutions.

For more background information, please view Dan’s LinkedIn page at: http://www.linkedin.com/in/danboschen


IEEE Boston Section recognized for Excellence in Membership Recruitment Performance

IEEE Boston Section was founded Feb 13, 1903, and serves more than 8,500 members of the IEEE. There are 29 chapters and affinity groups covering topics of interest from Aerospace & Electronic Systems, to Entrepreneur Network, to Women in Engineering, to Young Professionals. The chapters and affinity groups organize more than 100 meetings a year. In addition to the IEEE organization activities, the Boston Section organizes and sponsors up to seven conferences in any given year, as well as more than 45 short courses. The Boston Section publishes a bi-weekly newsletter and, currently, a monthly Digital Reflector newspaper included in IEEE membership.

The IEEE Boston Section also offers social programs such as the section annual meeting, Milestone events, and other non-technical professional activities to round out the local events. The Section also hosts one of the largest and longest running entrepreneurial support groups in IEEE.

More than 150 volunteers help create and coordinate events throughout the year.